SpatialDDS: The Typed Spatial Data Bus for Connected Systems

Robots, vehicles, base stations, AR/XR devices, IoT sensors, and digital twins all produce spatial data — detections, poses, maps, zones. Today each domain invents its own formats and transport. SpatialDDS provides a shared, typed, open protocol so spatial data flows across domains and operators without per-integration custom work — the interoperability layer for spatial computing.

Gaps SpatialDDS Addresses

No cross-domain spatial schema

ROS 2 has sensor_msgs, V2X has BSM/CAM, IoT has custom JSON. None define typed 3D detections, map alignments, or spatial zones that work across all three.

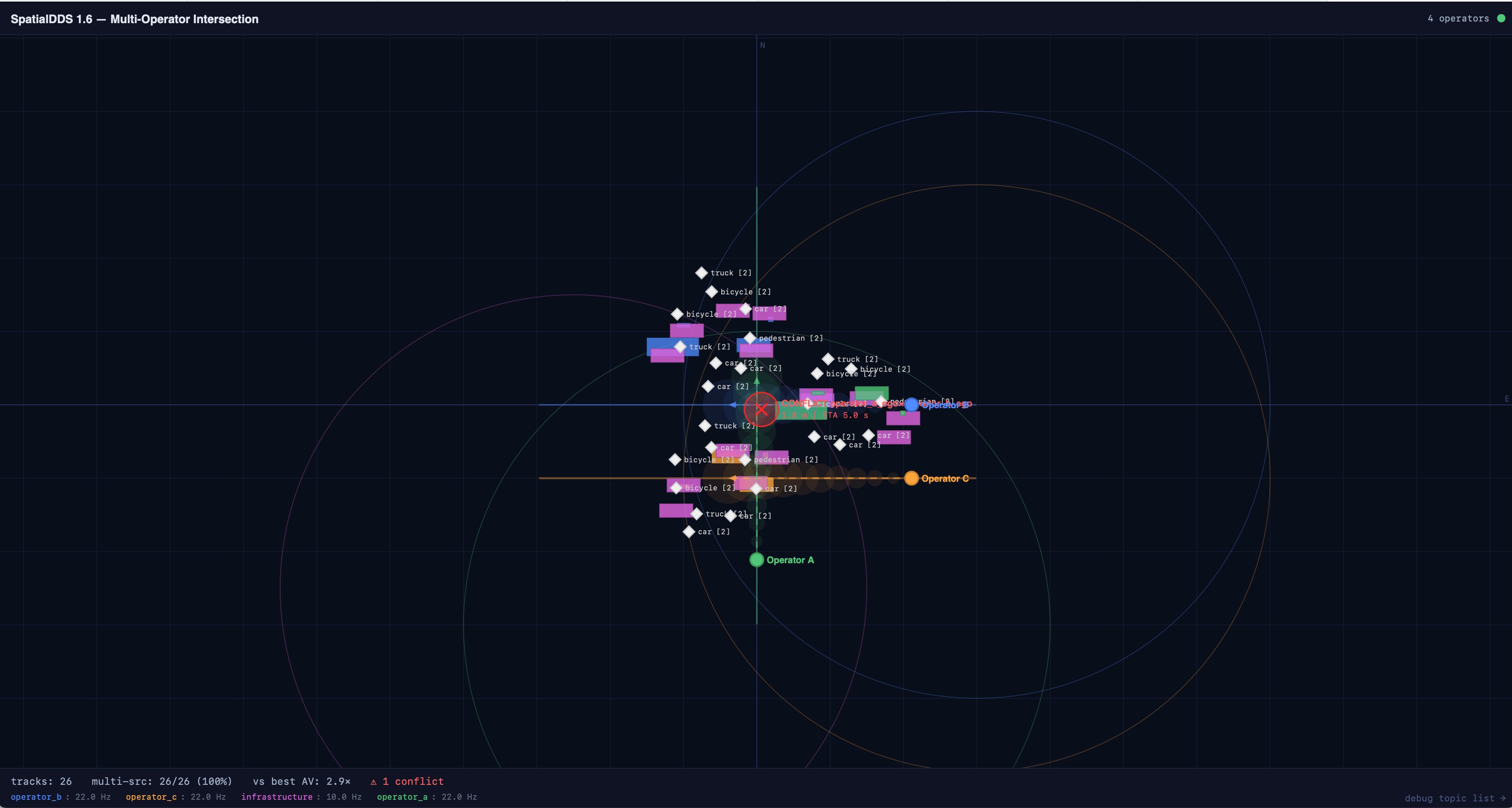

No multi-operator coordination

Existing stacks assume single-operator control. When Fleet A and Fleet B share an intersection, there's no standard for topic namespacing, provenance, or trust boundaries.

No infrastructure sensing integration

6G base stations will be sensors, yet no protocol connects their radar and beam observations to robot perception stacks. Integration today means custom APIs per vendor and per deployment.

No spatial discovery

New participants can't ask "who has mapped this area?" or "which sensors cover this intersection?" Discovery is either manual config or nonexistent.

What SpatialDDS Provides

Typed Spatial Messages

Schema-enforced types for every spatial concept — not opaque bytes or ad hoc JSON. Every consumer knows exactly what fields exist, what they mean, and how to interpret them.

Coordinate Frame DAG

FrameRef by UUID with transform chains. Multiple maps, multiple robots, multiple operators — all linked by typed transforms with uncertainty. No more "base_link" collisions.
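
To make the transform-chain idea concrete, here is a minimal sketch of UUID-keyed frames whose transforms compose up to a root. It is an illustration under assumptions, not the SpatialDDS API: `Transform2D`, `FrameGraph`, and `to_root` are hypothetical names, the graph is simplified to a tree with 2D rigid transforms, and uncertainty is left out.

```python
import math
import uuid
from dataclasses import dataclass


@dataclass(frozen=True)
class Transform2D:
    """Rigid 2D transform mapping child-frame coordinates into the parent frame."""
    yaw: float  # radians
    tx: float
    ty: float

    def compose(self, inner: "Transform2D") -> "Transform2D":
        # self maps B -> A, inner maps C -> B; the result maps C -> A.
        c, s = math.cos(self.yaw), math.sin(self.yaw)
        return Transform2D(
            self.yaw + inner.yaw,
            self.tx + c * inner.tx - s * inner.ty,
            self.ty + s * inner.tx + c * inner.ty,
        )

    def apply(self, x: float, y: float) -> tuple:
        c, s = math.cos(self.yaw), math.sin(self.yaw)
        return (self.tx + c * x - s * y, self.ty + s * x + c * y)


class FrameGraph:
    """Hypothetical frame registry: each frame UUID links to one parent frame."""

    def __init__(self) -> None:
        # child UUID -> (parent UUID, transform from child to parent)
        self._parent = {}

    def add(self, child: uuid.UUID, parent: uuid.UUID, child_to_parent: Transform2D) -> None:
        self._parent[child] = (parent, child_to_parent)

    def to_root(self, frame: uuid.UUID):
        """Walk parent links, composing transforms until a root frame is reached."""
        total = Transform2D(0.0, 0.0, 0.0)  # identity
        while frame in self._parent:
            parent, step = self._parent[frame]
            total = step.compose(total)
            frame = parent
        return frame, total
```

Because frames are keyed by UUID rather than by name, two robots can both call their local frame "base_link" without colliding in the shared graph.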

Spatial Discovery & Coverage

Participants announce what they sense and where. Consumers query by spatial region. Late joiners discover the network without manual configuration.
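
One way to picture a region query is as a bounding-box intersection over announced coverage. The sketch below is an in-memory illustration only; `BBox`, `Announcement`, and `CoverageIndex` are hypothetical names, and the real discovery profile may describe coverage quite differently.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class BBox:
    """Axis-aligned 2D bounding box in a shared frame (assumed units: metres)."""
    min_x: float
    min_y: float
    max_x: float
    max_y: float

    def intersects(self, other: "BBox") -> bool:
        return (self.min_x <= other.max_x and other.min_x <= self.max_x
                and self.min_y <= other.max_y and other.min_y <= self.max_y)


@dataclass(frozen=True)
class Announcement:
    participant: str  # who is announcing
    kind: str         # what they sense or provide, e.g. "lidar" or "map"
    coverage: BBox    # where


class CoverageIndex:
    """Hypothetical store of announcements that answers region queries."""

    def __init__(self) -> None:
        self._announcements: List[Announcement] = []

    def announce(self, ann: Announcement) -> None:
        self._announcements.append(ann)

    def query(self, region: BBox, kind: Optional[str] = None) -> List[Announcement]:
        # Return every announcement whose coverage overlaps the region,
        # optionally filtered by kind.
        return [a for a in self._announcements
                if a.coverage.intersects(region) and (kind is None or a.kind == kind)]
```

A late joiner would call `query` with the region it cares about, which is the programmatic form of asking "which sensors cover this intersection?".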

Multi-Source Fusion Support

Source provenance, uncertainty, entity correlation. A fusion service consumes Detection3D from N sources and publishes FusedTrack — without knowing the sources' internals.
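
As a rough illustration of why per-source uncertainty and provenance matter, the sketch below fuses positions by inverse-variance weighting. This technique is swapped in for illustration and is not taken from the specification; the fields on `Detection3D` and `FusedTrack` are assumed, and the detections are assumed to be already correlated to one entity and expressed in a common frame.

```python
from dataclasses import dataclass
from typing import Sequence, Tuple


@dataclass(frozen=True)
class Detection3D:
    source_id: str                        # provenance: which source produced it
    position: Tuple[float, float, float]  # metres, in a shared frame
    variance: float                       # isotropic position variance, m^2 (assumed field)


@dataclass(frozen=True)
class FusedTrack:
    position: Tuple[float, float, float]
    variance: float
    sources: Tuple[str, ...]


def fuse(detections: Sequence[Detection3D]) -> FusedTrack:
    """Inverse-variance weighted mean of detections of the same entity."""
    if not detections:
        raise ValueError("need at least one detection")
    weights = [1.0 / d.variance for d in detections]
    total = sum(weights)
    position = tuple(
        sum(w * d.position[i] for w, d in zip(weights, detections)) / total
        for i in range(3)
    )
    # The fused variance is never larger than the best single source's variance.
    return FusedTrack(position, 1.0 / total, tuple(d.source_id for d in detections))
```

The fusion function reads only the typed fields, so it needs no knowledge of how each source produced its detection.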

Map & Twin Lifecycle

Maps go from BUILDING to OPTIMIZING to STABLE. Inter-map alignments carry evidence and revision numbers. Zone state tracks occupancy in real time.
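
The map lifecycle can be read as a small state machine. The sketch below encodes only the forward path named above; whether a STABLE map can return to OPTIMIZING is not stated here, so the transition table is an assumption, and `MapState` and `advance` are hypothetical names.

```python
from enum import Enum


class MapState(Enum):
    BUILDING = "BUILDING"
    OPTIMIZING = "OPTIMIZING"
    STABLE = "STABLE"


# Assumed transition table: forward-only, following the order in the text.
_ALLOWED = {
    MapState.BUILDING: {MapState.OPTIMIZING},
    MapState.OPTIMIZING: {MapState.STABLE},
    MapState.STABLE: set(),
}


def advance(current: MapState, target: MapState) -> MapState:
    """Return the new state, or raise if the transition is not allowed."""
    if target not in _ALLOWED[current]:
        raise ValueError(f"illegal transition {current.value} -> {target.value}")
    return target
```

A consumer could use the state to decide, for example, whether to treat a map as safe to localize against or still subject to change.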

Real-Time DDS Transport

Built on OMG DDS with configurable QoS — BEST_EFFORT for high-rate sensors, RELIABLE for detections, TRANSIENT_LOCAL for metadata. Peer-to-peer, no central broker required.
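
In DDS, reliability (BEST_EFFORT or RELIABLE) and durability (VOLATILE, TRANSIENT_LOCAL, and others) are separate QoS policies, so each topic class is really a pair of settings. The table below is a plain-Python sketch of that pairing, not code for any particular DDS vendor API; the topic class names are hypothetical, and combining TRANSIENT_LOCAL with RELIABLE for metadata is an assumption.

```python
from dataclasses import dataclass
from enum import Enum


class Reliability(Enum):
    BEST_EFFORT = "BEST_EFFORT"
    RELIABLE = "RELIABLE"


class Durability(Enum):
    VOLATILE = "VOLATILE"
    TRANSIENT_LOCAL = "TRANSIENT_LOCAL"


@dataclass(frozen=True)
class QosProfile:
    reliability: Reliability
    durability: Durability


# Hypothetical topic classes mapped to the QoS choices described above.
QOS_BY_TOPIC_CLASS = {
    "sensor_stream": QosProfile(Reliability.BEST_EFFORT, Durability.VOLATILE),
    "detection": QosProfile(Reliability.RELIABLE, Durability.VOLATILE),
    "metadata": QosProfile(Reliability.RELIABLE, Durability.TRANSIENT_LOCAL),
}


def qos_for(topic_class: str) -> QosProfile:
    """Look up the QoS profile for a topic class, failing loudly on unknown names."""
    try:
        return QOS_BY_TOPIC_CLASS[topic_class]
    except KeyError:
        raise ValueError(f"unknown topic class: {topic_class!r}") from None
```

TRANSIENT_LOCAL is what lets a late-joining reader receive metadata that was published before it connected.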

Ecosystem Bridges — Meet Every Community Where They Are

MCAP Recorder

Record multi-source spatial streams. Replay for re-analysis. Ingest into ML training pipelines (LeRobot, Open X-Embodiment). Visualize in Foxglove.

ROS 2 Bridge

Translate sensor_msgs and vision_msgs. Operator-scoped UUIDv5 frames solve tf2 collisions. Separate DDS domains — zero interference with your robot stack.
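
The operator-scoped UUIDv5 idea can be sketched with Python's standard `uuid` module. The exact namespace and name scheme the bridge uses is not given here, so the derivation below (an operator namespace from a DNS-style name, then `robot/frame` as the name) is an assumption, and `operator_namespace` and `frame_uuid` are hypothetical helpers.

```python
import uuid


def operator_namespace(operator: str) -> uuid.UUID:
    """Derive a stable per-operator namespace (assumed scheme: DNS-style name)."""
    return uuid.uuid5(uuid.NAMESPACE_DNS, operator)


def frame_uuid(operator: str, robot: str, tf_frame: str) -> uuid.UUID:
    """Derive a deterministic frame UUID scoped to one operator and robot."""
    return uuid.uuid5(operator_namespace(operator), f"{robot}/{tf_frame}")
```

UUIDv5 is deterministic, so every bridge instance derives the same identifier for the same inputs, while two operators that both use a tf2 frame named `base_link` end up with different UUIDs.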

MQTT Bridge

Edge-to-cloud over Mosquitto or AWS IoT Core. QoS mapping, retained messages for metadata, per-operator topic policies. Loop prevention built in.

WebSocket Bridge

Client-driven subscriptions with glob patterns. Topic discovery. Rate limiting. Bidirectional — browser apps can publish back. Built-in debug dashboard.
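
Glob-based subscriptions can be illustrated with the standard `fnmatch` module. This is a sketch of the matching logic only, not the bridge's wire protocol; the topic names and the `Subscriptions` class are hypothetical, and the bridge's glob dialect may differ from `fnmatch` (where `*` also matches `/`).

```python
from fnmatch import fnmatchcase
from typing import Iterable, List, Set


class Subscriptions:
    """Per-client set of glob patterns, as a client-driven bridge might keep."""

    def __init__(self) -> None:
        self._patterns: Set[str] = set()

    def subscribe(self, pattern: str) -> None:
        self._patterns.add(pattern)

    def unsubscribe(self, pattern: str) -> None:
        self._patterns.discard(pattern)

    def matches(self, topic: str) -> bool:
        # Case-sensitive glob match against any active pattern.
        return any(fnmatchcase(topic, p) for p in self._patterns)


def route(topics: Iterable[str], subs: Subscriptions) -> List[str]:
    """Return the topics that should be forwarded to this client."""
    return [t for t in topics if subs.matches(t)]
```

A browser client that subscribes to `spatialdds/fleet-a/*` would then receive every topic under that prefix and nothing from other operators.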

One-Button Deploy — Laptop to Cloud

docker compose up runs the stack locally in 30 seconds, with synthetic multi-operator intersection data, a fusion service, and a web dashboard. ./deploy.sh deploys the same stack to AWS Fargate in 5 minutes: an ALB with WebSocket support and all four bridges, for ~$2.50/day. Both paths use the same Docker image, the same DDS domain, and the same dashboard.

SpatialDDS 1.6 Specification

14 profiles · 60+ IDL types · 214 conformance checks across 5 public datasets. Profiles cover core, sensing, discovery, anchors, mapping, semantics, and events, plus provisional profiles for RF beam and radio fingerprinting.